Mitigating Divergence of Latent Factors via Dual Ascent for Low Latency Event Prediction Models

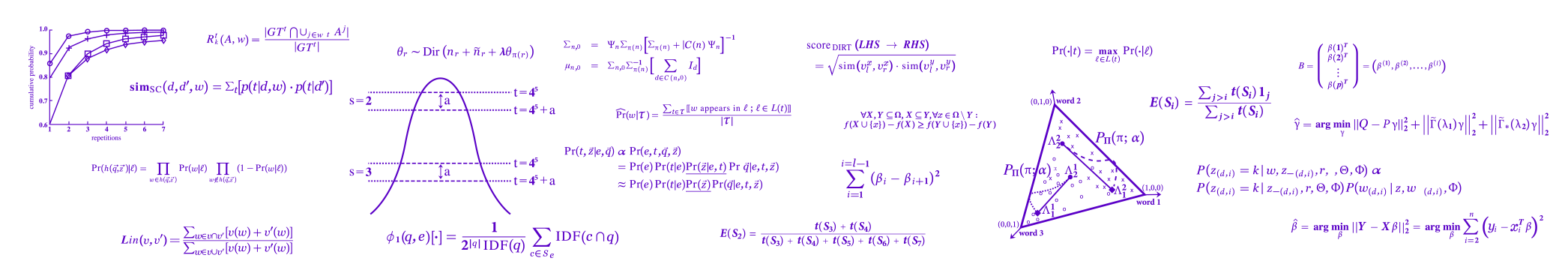

Real-world content recommendation marketplaces exhibit certain behaviors and are imposed by constraints that are not always apparent in common static offline data sets. One example that is common in ad marketplaces is swift ad turnover. New ads are introduced and old ads disappear at high rates every day. Another example is ad discontinuity, where existing ads may appear and disappear from the market for non negligible amounts of time due to a variety of reasons (e.g., depletion of budget, pausing by the advertiser, flagging by the system, and more). These behaviors sometimes cause the model's loss surface to change dramatically over short periods of time. To address these behaviors, fresh models are highly important, and to achieve this (and for several other reasons) incremental training on small chunks of past events is often employed. These behaviors and algorithmic optimizations occasionally cause model parameters to grow uncontrollably large, or \emph{diverge}. In this work present a systematic method to prevent model parameters from diverging by imposing a carefully chosen set of constraints on the model's latent vectors. We then devise a method inspired by primal-dual optimization algorithms to fulfill these constraints in a manner which both aligns well with incremental model training, and does not require any major modifications to the underlying model training algorithm.We analyze, demonstrate, and motivate our method on OFFSET, a collaborative filtering algorithm which drives Yahoo native advertising, which is one of VZM's largest and faster growing businesses, reaching a run-rate of many hundreds of millions USD per year. Finally, we conduct an online experiment which shows a substantial reduction in the number of diverging instances, and a significant improvement to both user experience and revenue.